Answer this: what’s the first thing that comes to mind when you want to improve your website’s rankings?

SEO? Content? Backlinks?

Sure, those are all important.

But there are two principles that affect your rankings and that many marketers take for granted: crawlability and indexability.

You often hear these words thrown around, so I want to touch on them briefly here.

Crawlability and Indexability: How Do They Affect Rankings?

Search engines like Google don’t just magically pull up relevant webpages when you type in your query.

Behind the scenes, these search engines have bots (also called spiders or crawlers) that scour the internet for content.

Crawlability describes the search engine’s ability to access and crawl content on a page.

This is another big topic that requires its own post. But the main takeaway is this: if your website or parts of it can’t be crawled by these spiders, they won’t get indexed. And if they aren’t indexed, they won’t show up on search engines.
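One common crawlability blocker is a `robots.txt` rule that tells crawlers to stay out of part of a site. As a rough local check, here’s a minimal Python sketch using the standard library’s `urllib.robotparser`. The robots.txt contents and example.com URLs are hypothetical, and Python’s parser doesn’t implement every detail of Google’s own matching rules (path wildcards, for instance), so treat the result as a hint rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for an example site (not taken from a real site).
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /private/
"""


def is_crawlable(url: str, robots_txt: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the robots.txt rules let `user_agent` fetch `url`."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/blog/new-post/",
                "https://example.com/private/draft/"):
        status = "crawlable" if is_crawlable(url, ROBOTS_TXT) else "blocked by robots.txt"
        print(f"{url} -> {status}")
```

To check a live site instead, `RobotFileParser` also provides `set_url()` and `read()`, which fetch the robots.txt file from your domain.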

Watch the video below to understand how search works:

After the bots crawl a page, its content is indexed and stored on the search engine’s servers. As Matt says at the start of the video, when you search on Google, “you aren’t actually searching the web. You’re searching Google’s index of the web.”

Indexability refers to the search engine’s ability to analyze and add a page to its index.

This means that if you publish a new page or update an old blog post, those changes won’t show up on search engines until the page is crawled and indexed.
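A page can be perfectly crawlable and still be kept out of the index, for example by a `noindex` directive in a robots meta tag or in an `X-Robots-Tag` HTTP header. The minimal Python sketch below uses only the standard library’s `html.parser` to flag those directives in a page you’ve already downloaded. The HTML snippets are hypothetical, and the sketch deliberately ignores user-agent-specific header values such as `googlebot: noindex`.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # html.parser lowercases attribute names; attribute values can be None.
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr_map.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def is_indexable(html: str, x_robots_tag: str = "") -> bool:
    """Return False if a robots meta tag or X-Robots-Tag value says noindex (or none)."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    header_directives = {d.strip().lower() for d in x_robots_tag.split(",")}
    directives = parser.directives | header_directives
    # "none" is shorthand for "noindex, nofollow".
    return not ({"noindex", "none"} & directives)


if __name__ == "__main__":
    draft = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(is_indexable(draft))                                             # False
    print(is_indexable("<html><head><title>Post</title></head></html>"))  # True
    print(is_indexable("<html></html>", x_robots_tag="noindex"))          # False
```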

In a future article, I’ll cover what you should do when Google can’t crawl and/or index your website.

Below, we’ll look at two ways to ask Google to index your new page(s) or to re-index pages you’ve updated. As a high-level overview:

- Use method 1 if you made changes to many URLs or pages

- Use method 2 if you made changes to one or a few URLs

Method 1: Submit a Sitemap in Google Search Console

The easiest way to ask Google to crawl and index your website is to submit a sitemap in Google Search Console.

First, make sure that you have already created a Google Search Console account and added and verified your website.

Next, if you are using WordPress, install an SEO plugin. Popular choices these days are Rank Math and Yoast, and both will create the sitemap for you automatically.
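If your site isn’t on WordPress, you can still produce a sitemap yourself. The format comes from the sitemaps.org protocol: a `<urlset>` element containing one `<url>` entry per page, each with a required `<loc>` and an optional `<lastmod>`. Here’s a minimal Python sketch using the standard library’s `xml.etree.ElementTree`. The page list and example.com URLs are hypothetical; a real site would pull them from its CMS or file system.

```python
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, lastmod) pairs; lastmod is a YYYY-MM-DD string or None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" in URLs automatically.
        ET.SubElement(entry, "loc").text = loc
        if lastmod:
            ET.SubElement(entry, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages; replace with your own URLs and modification dates.
    pages = [
        ("https://example.com/", "2024-01-30"),
        ("https://example.com/blog/new-post/", None),
    ]
    print(build_sitemap(pages))
```

You would then upload the output to your site (for example as `/sitemap.xml`) and use that URL in the steps below.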

Step 1: Get Your Sitemap URL

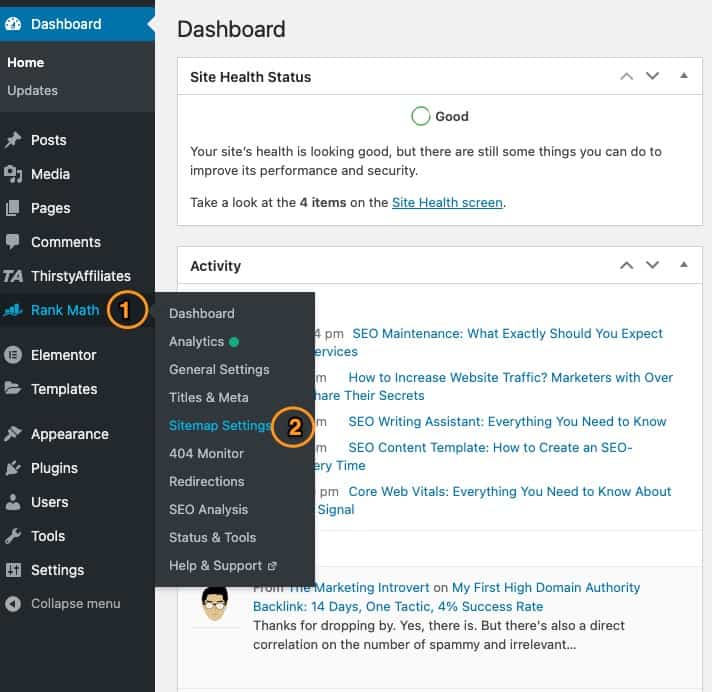

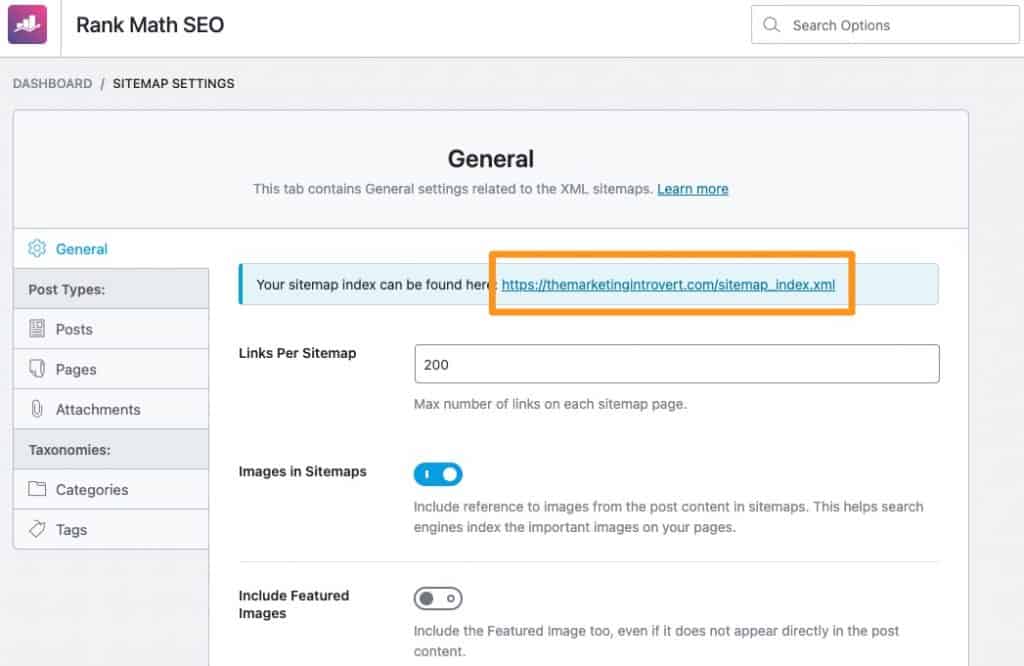

Log in to your WordPress dashboard and go to the sitemap settings. Here’s what it may look like if you’re using Rank Math.

You’ll see a screen showing your sitemap index URL. Copy it, then proceed to the next step.
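If you’re curious what that sitemap index actually contains: it’s a small XML file that points to one or more child sitemaps, since SEO plugins typically split posts and pages into separate files. The Python sketch below, using only the standard library, lists the child sitemaps in an index. The sample XML and file names are hypothetical; your plugin’s output will differ.

```python
from xml.etree import ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap index, similar in shape to what SEO plugins generate.
SAMPLE_INDEX = b"""<?xml version="1.0" encoding="UTF-8"?>
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap>
    <loc>https://example.com/post-sitemap.xml</loc>
    <lastmod>2024-02-02T10:00:00+00:00</lastmod>
  </sitemap>
  <sitemap>
    <loc>https://example.com/page-sitemap.xml</loc>
  </sitemap>
</sitemapindex>
"""


def child_sitemaps(index_xml: bytes) -> list:
    """Return the <loc> of every <sitemap> entry in a sitemap index."""
    root = ET.fromstring(index_xml)
    if root.tag != "{%s}sitemapindex" % NS["sm"]:
        raise ValueError(f"not a sitemap index (root element is {root.tag!r})")
    return [loc.text.strip() for loc in root.findall("sm:sitemap/sm:loc", NS)]


if __name__ == "__main__":
    for url in child_sitemaps(SAMPLE_INDEX):
        print(url)
```

To inspect your live index instead, you could pass in the bytes returned by `urllib.request.urlopen(sitemap_url).read()`.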

Step 2: Add the Sitemap to Google Search Console

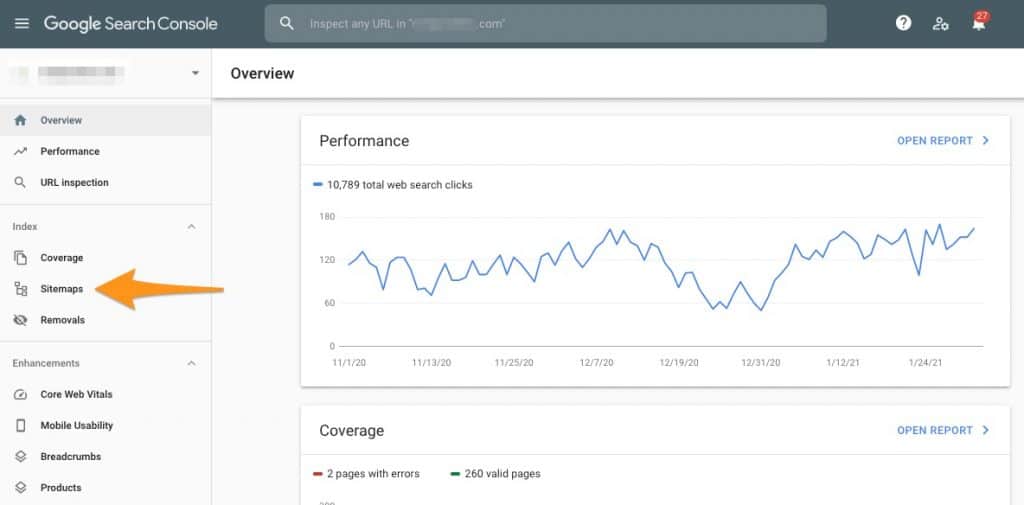

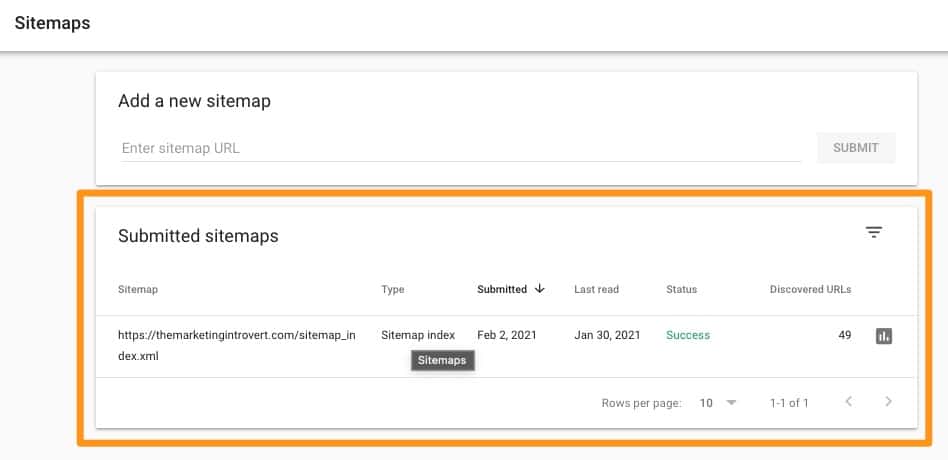

Go to Google Search Console and click Sitemaps in the menu.

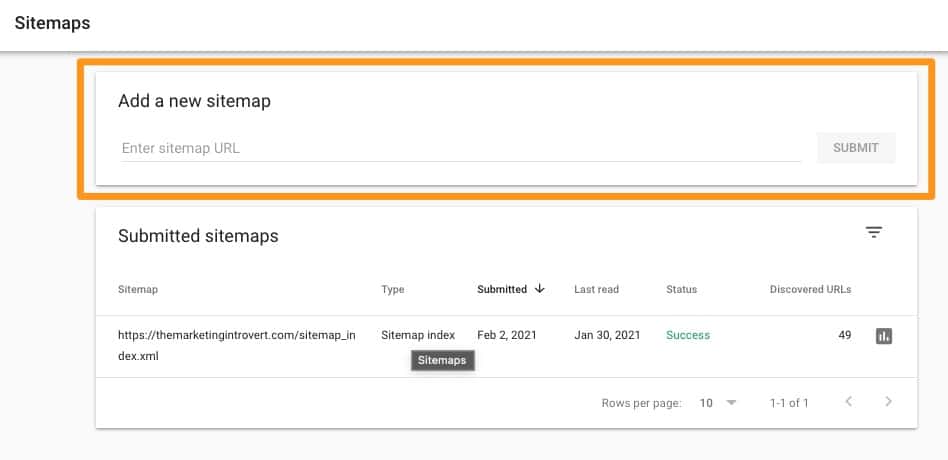

Add the sitemap index URL you copied in Step 1, then click Submit.

After adding the sitemap, you’ll see its status below it. In the example below, you can see that I last re-submitted my sitemap on 2 Feb, but Google last read it on 30 Jan.

This means that any changes I made after 30 Jan may not be reflected yet, because Google hasn’t read the updated sitemap.

Step 3: Check for Errors

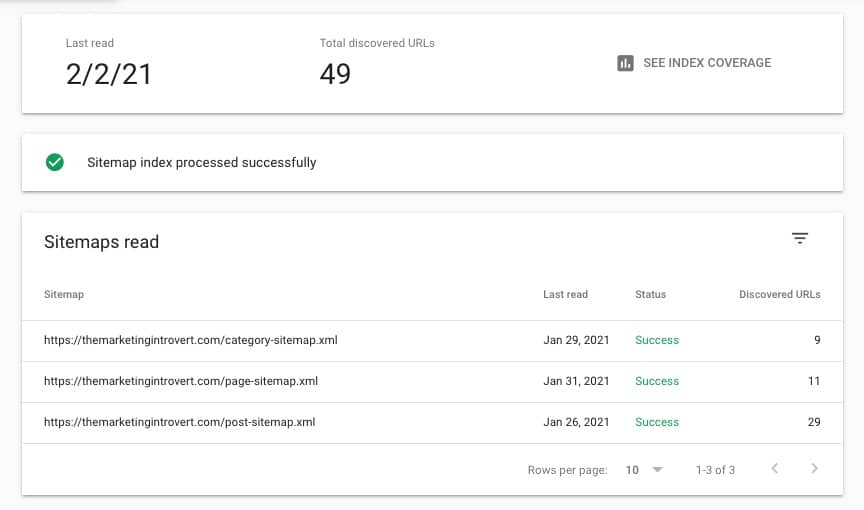

Click the submitted sitemap to see whether it contains errors. If this is a relatively new website and you haven’t made unnecessary changes to the sitemap settings, you shouldn’t see any.

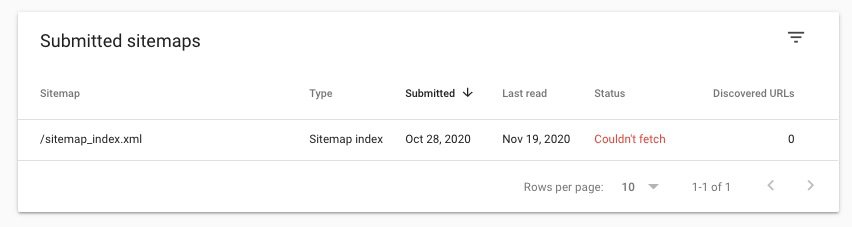

But in some cases, the status won’t say “Success.” Here’s an example:

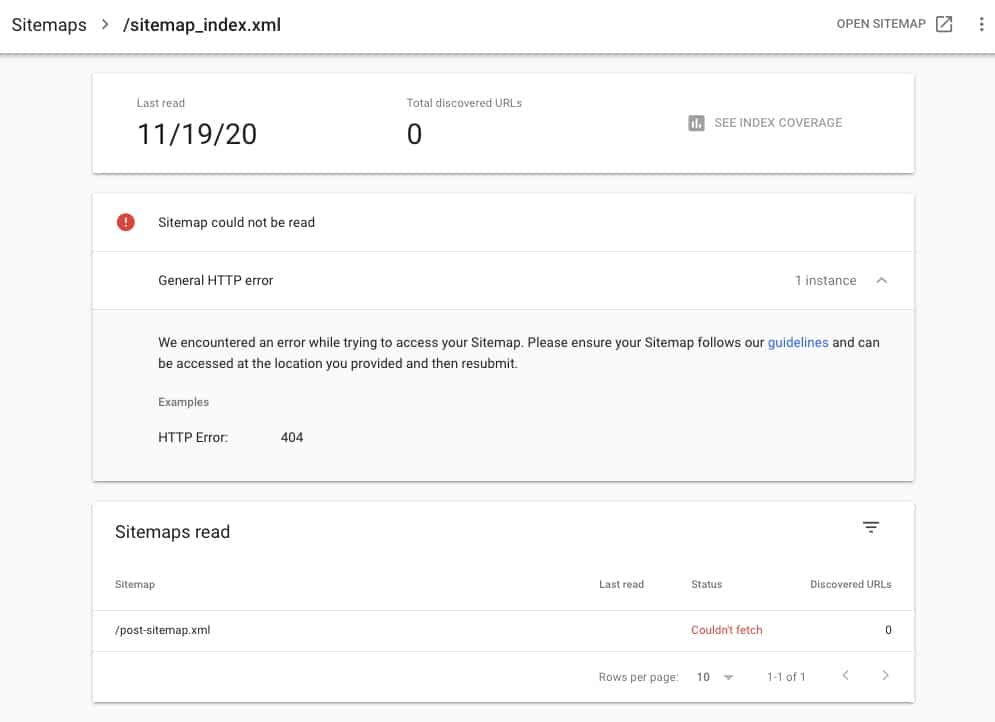

If you click it, Google will tell you what the error is and offer some help resolving it.

I’ll cover troubleshooting the common errors you’ll find here in a future article.

Remember: if your site can’t be crawled, it won’t be indexed, and if it’s not indexed, it won’t appear on search engines. So if you see errors here, resolve them as quickly as possible.
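Search Console only reports problems after it fetches your sitemap. If you build or edit a sitemap yourself, a quick local check can catch a few basic mistakes before you submit it. The Python sketch below checks that the file is well-formed XML, that the root is a sitemaps.org `<urlset>`, that every entry has a `<loc>` holding an absolute URL, and that the file stays within the protocol’s limit of 50,000 URLs. It won’t catch everything Google checks, and the sample sitemap is hypothetical.

```python
from urllib.parse import urlparse
from xml.etree import ElementTree as ET

SM = "{http://www.sitemaps.org/schemas/sitemap/0.9}"
MAX_URLS = 50_000  # per-file limit in the sitemaps.org protocol


def sitemap_problems(xml_bytes: bytes) -> list:
    """Return human-readable problems found in a <urlset> sitemap (empty list if none)."""
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        return [f"not well-formed XML: {exc}"]
    if root.tag != SM + "urlset":
        return [f"unexpected root element {root.tag!r}"]

    problems = []
    entries = root.findall(SM + "url")
    if len(entries) > MAX_URLS:
        problems.append(f"{len(entries)} URLs exceeds the {MAX_URLS} per-file limit")
    for i, entry in enumerate(entries, start=1):
        loc = entry.find(SM + "loc")
        text = (loc.text or "").strip() if loc is not None else ""
        if not text:
            problems.append(f"entry {i}: missing <loc>")
            continue
        parsed = urlparse(text)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            problems.append(f"entry {i}: {text!r} is not an absolute URL")
    return problems


if __name__ == "__main__":
    # Hypothetical sitemap with two deliberate mistakes.
    broken = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>/blog/new-post/</loc></url>
  <url><lastmod>2024-01-30</lastmod></url>
</urlset>"""
    for problem in sitemap_problems(broken):
        print(problem)
```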

Method 2: Use the URL Inspection Tool

If you’ve only updated one post, the URL Inspection tool can be faster. Here’s how you do it.

Step 1: Get the URL of the Page You Want to Be Re-indexed

Let’s say you just published a new blog post. As mentioned above, it won’t appear on search engines until it’s crawled and indexed.

After publishing, copy the URL, then head over to Google Search Console.
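One thing worth checking before you paste: the URL Inspection tool only inspects URLs that belong to the property you’re working in, so an `http://` URL won’t match an `https://` URL-prefix property, for example. Here’s a hypothetical Python helper that mirrors that rule of thumb. The `sc-domain:` notation for domain properties is borrowed from the Search Console API’s naming and is an assumption of this sketch, not something you type into the tool.

```python
from urllib.parse import urlparse


def url_in_property(url: str, prop: str) -> bool:
    """Rough check that `url` falls under a Search Console property (hypothetical helper).

    `prop` is either a URL-prefix property such as "https://example.com/" or a
    domain property written as "sc-domain:example.com" (an assumed notation).
    """
    if prop.startswith("sc-domain:"):
        # Domain properties cover every protocol and subdomain of the domain.
        domain = prop[len("sc-domain:"):].lower()
        host = (urlparse(url).hostname or "").lower()
        return host == domain or host.endswith("." + domain)
    # URL-prefix properties match only URLs that start with the exact prefix,
    # including the protocol (http vs https) and any www subdomain.
    return url.startswith(prop)


if __name__ == "__main__":
    print(url_in_property("https://example.com/blog/new-post/", "https://example.com/"))  # True
    print(url_in_property("http://example.com/blog/new-post/", "https://example.com/"))   # False
    print(url_in_property("https://blog.example.com/post/", "sc-domain:example.com"))     # True
```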

Step 2: Enter the URL in the Inspection Tool

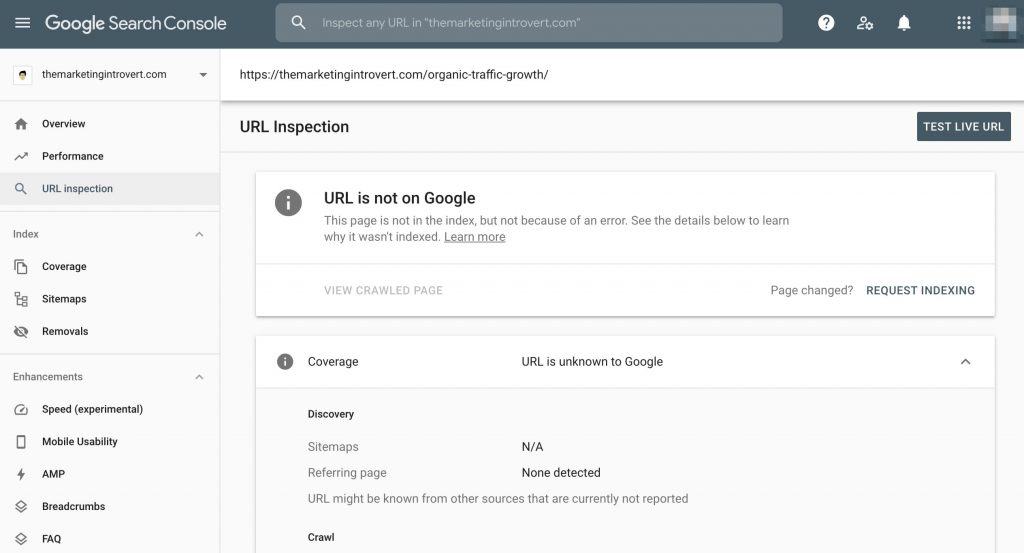

After logging in, paste the link into the URL Inspection bar at the top. For a newly published page, it will tell you that the URL is not on Google.

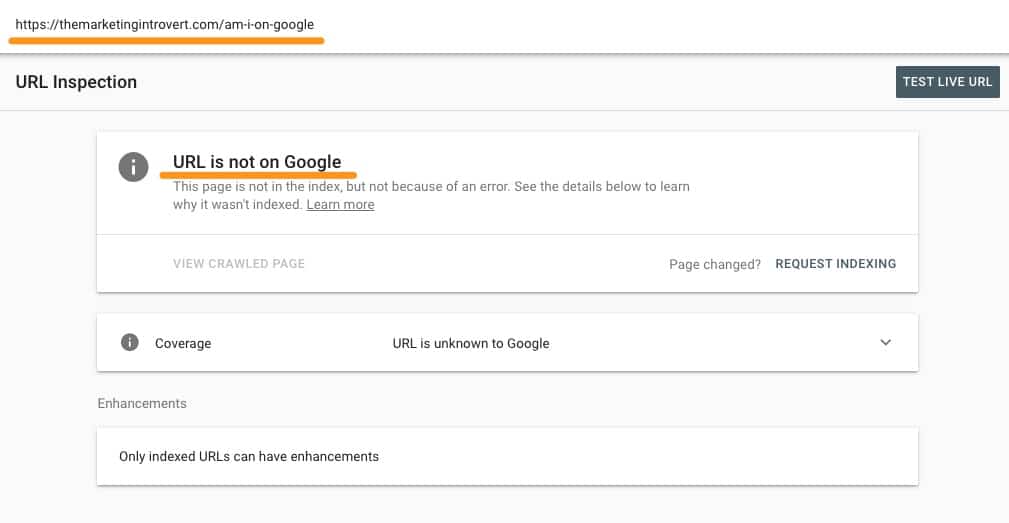

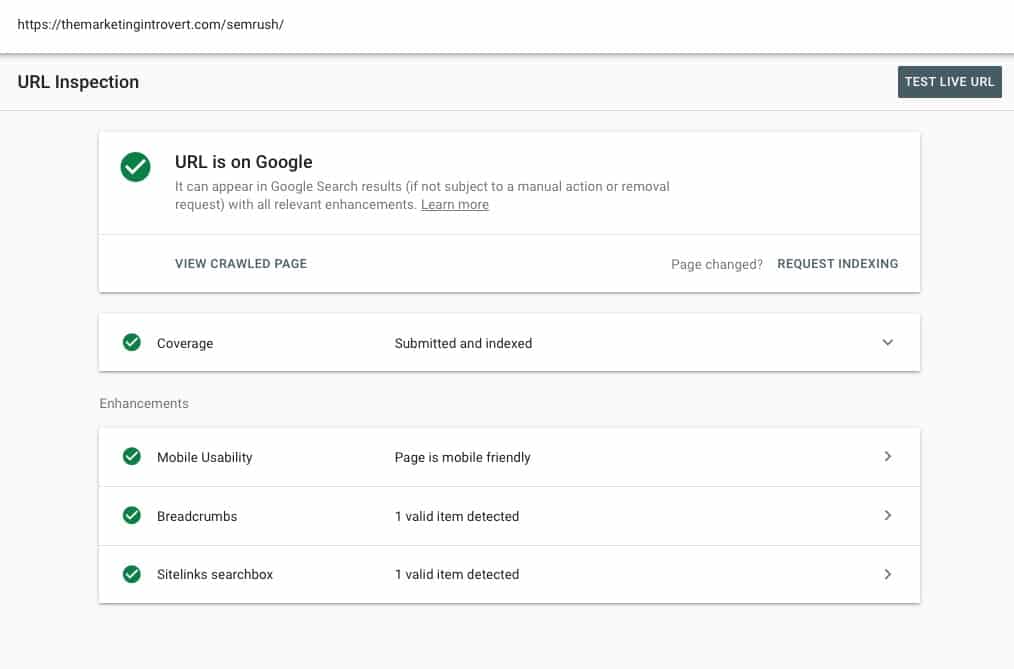

On the other hand, if you updated an existing page and want it to be re-indexed, you will see a similar screen.

Step 3: Request Manual Indexing

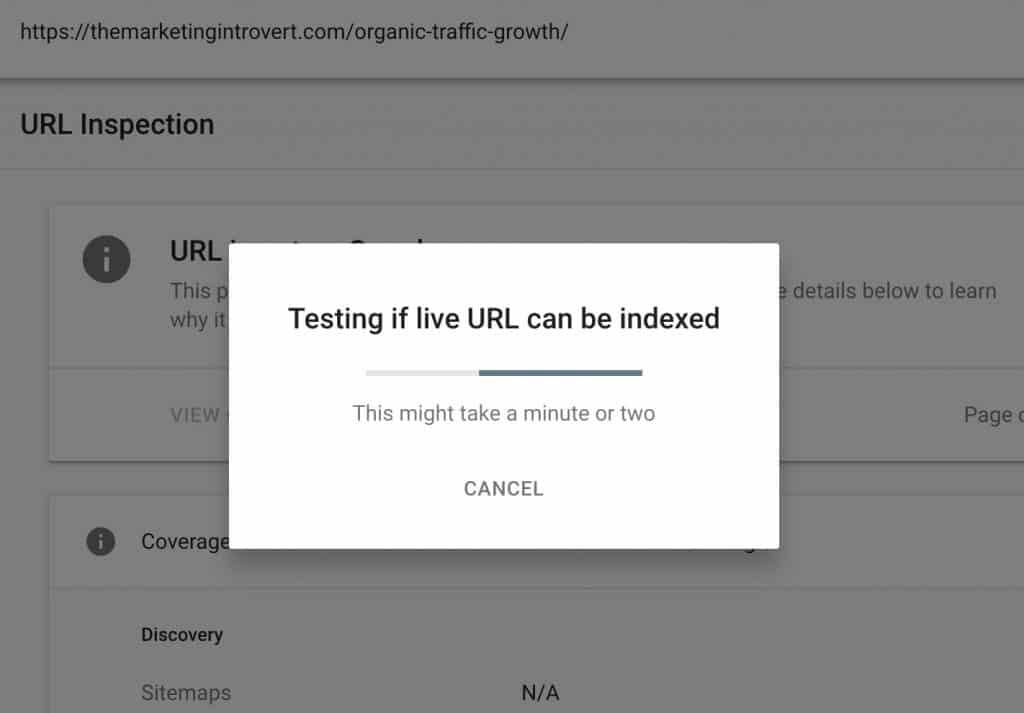

In both cases, the last step is to manually request indexing by clicking the Request Indexing button. Google will then run a quick test to check whether the page can be indexed.

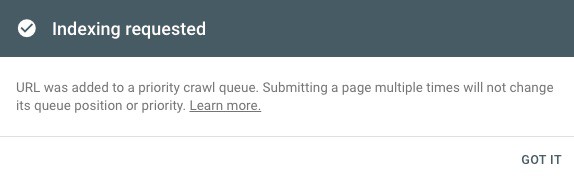

If there are no errors, you will see another notification saying that the page has been added to a crawl queue. It also notes that submitting the URL multiple times won’t speed up the process.

At this point, all you can do is wait. It might take anywhere from a few hours to a few weeks for your changes to show up.

Since this is out of your hands, focus on other areas like creating more high-quality content or optimizing your website speed.

How to Check if My Pages Are Crawled and Indexed

To check whether a page appears on Google, enter its URL in the URL Inspection tool. It will tell you whether the page is on Google.

Note that just because it appears on Google doesn’t mean you’ll find it on the first page. Ranking is different from indexability.

Frequently Asked Questions

How long does crawling take?

It varies from website to website. Crawling may take anywhere from a few minutes to a few weeks, depending on several factors.

Will re-submitting my page in the URL Inspection tool get it indexed faster?

No. Submitting the same URL several times only wastes your time. Google itself states that doing so won’t change the page’s queue position or priority.

Why is my page not showing up on Google?

There are many factors affecting this, but the first things you need to check are crawlability and indexability. If Google can’t crawl your pages, it can’t index them. And if they aren’t indexed, they won’t show up on Google.

Do I have to submit my sitemap to Google?

Strictly speaking, no. Google can find and crawl your pages on its own; submitting a sitemap just helps speed up that process. According to several studies, adding a sitemap doesn’t affect rankings by itself.
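Besides submitting it in Search Console, you can also point crawlers to your sitemap with a `Sitemap:` line in your `robots.txt` file, which the sitemaps.org protocol supports. The short Python sketch below reads those lines with the standard library’s `urllib.robotparser` (its `site_maps()` method needs Python 3.8 or later). The robots.txt contents are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that advertises a sitemap index.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

Sitemap: https://example.com/sitemap_index.xml
"""


def sitemaps_from_robots(robots_txt: str) -> list:
    """Return the sitemap URLs declared with Sitemap: lines (empty list if none)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when the file declares no sitemaps.
    return parser.site_maps() or []


if __name__ == "__main__":
    print(sitemaps_from_robots(ROBOTS_TXT))  # ['https://example.com/sitemap_index.xml']
```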